You Already Know How to Do This

If you've opened an AI tool, typed a quick sentence, and felt underwhelmed by what came back, you're not alone. Many research professionals try it once, get generic results, and quietly conclude that AI isn't ready for serious work. The problem isn't the technology. It's the frame.

Most people approach AI like a search engine. They type a command ("summarise this study") and expect magic. When the output is shallow, they assume they lack some technical skill they haven't learned yet. But that's not what's missing. What's missing is a mental model that unlocks what you already know how to do.

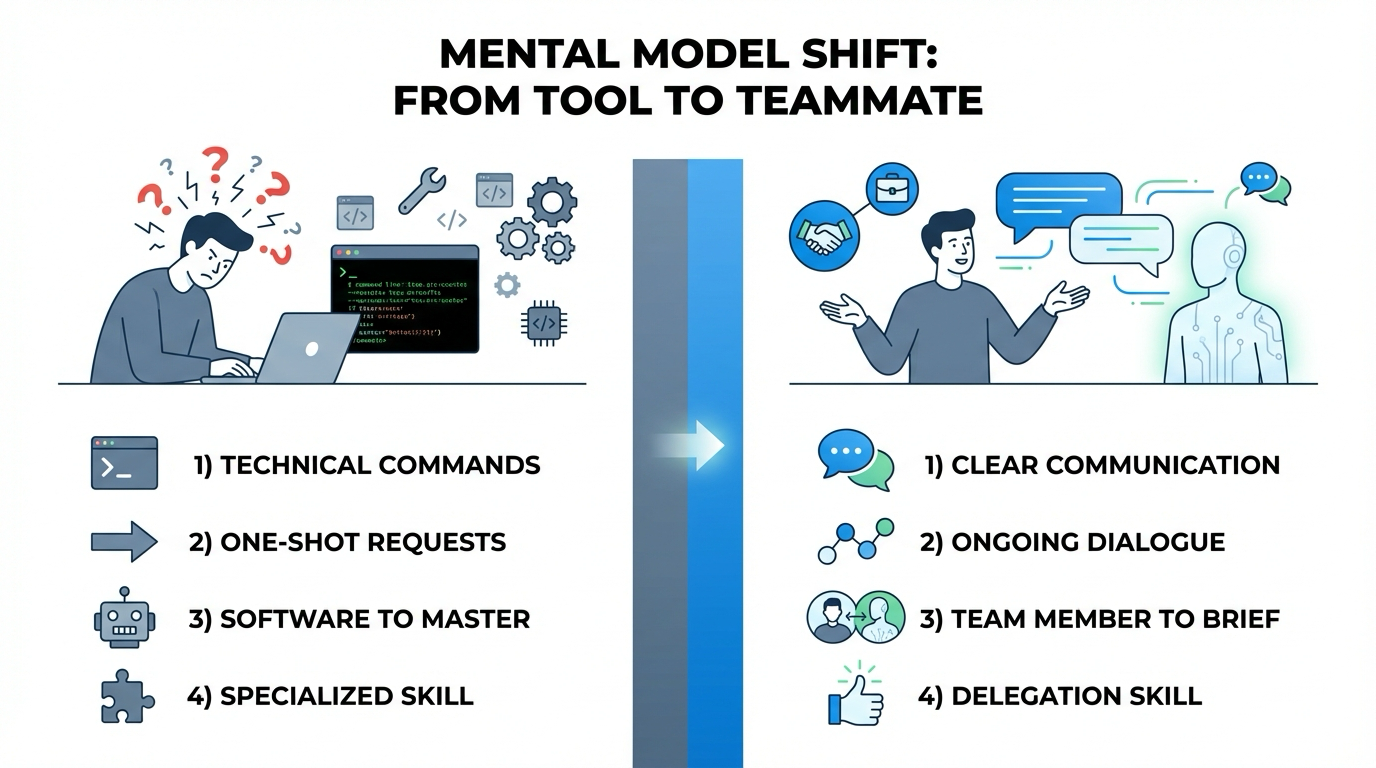

The Shift: From Tool to Teammate

Here's the reframe: Stop thinking of AI as a tool you use. Start thinking of it as a capable but completely context-free colleague you're briefing.

When you hand a task to a new team member, you don't just say "analyse this dataset" and walk away. You explain who you are, what the project is, what stage you're at, and what you need. You describe what good looks like. You share why this matters and what decision it supports. That's not a special skill. That's just how professionals delegate work.

The same instincts apply to AI. The difference between mediocre and genuinely useful output isn't technical expertise. It's communication clarity. If you've ever written a clear email to a colleague asking for help, you already have 80% of what it takes to get great results from AI.

Why the Barrier Feels Technical (But Isn't)

The barrier to AI adoption isn't skill or access. It's culture. When organisations frame AI use as a technical capability, adoption fragments: people worry that using AI makes them look less competent, or that they should already know how to "prompt engineer." The obstacle is that framing, not any absence of technical skill.

Healthcare organisations that have successfully integrated AI don't treat it as a specialised skill requiring training in technical jargon. They treat it as standard professional communication — like writing a clear brief or setting expectations with a vendor. When leadership models transparent AI use and communicates that it's valued, adoption accelerates.

The 80% You Already Have

Research on workplace delegation confirms that effective delegation is fundamentally a communication skill, not a technical one. It requires clarity about objectives, trust in the process, and regular feedback. These are skills professionals use every day that transfer directly to AI interaction once the framing shifts.

The following sections walk through five specific skills. Each is something you already use with colleagues. Each transfers directly to AI with a small adjustment in how you apply it.

Set the Scene: Why Context Changes Everything

When you brief a new team member on a project, you don't start with the task. You start with the context. You explain the project background, the goal, the audience, and the stage of work. Without that scene-setting, even a capable colleague will produce something generic — because they're working with incomplete information.

AI has the same limitation. It has extensive general knowledge but no knowledge of your specific project, your organisation's priorities, your audience's concerns, or the decision this work will support. When you jump straight to the task without setting the scene, you're asking AI to guess everything it doesn't know.

What Context Actually Does

Context doesn't just add background. It changes what AI prioritises, what it emphasises, and what it leaves out. Without context, AI optimises for generic completeness — covering everything relevant to the topic. With context, AI optimises for your specific situation — emphasising what matters to your audience and decision.

This is the difference between a response that's technically correct and one that's actually useful.

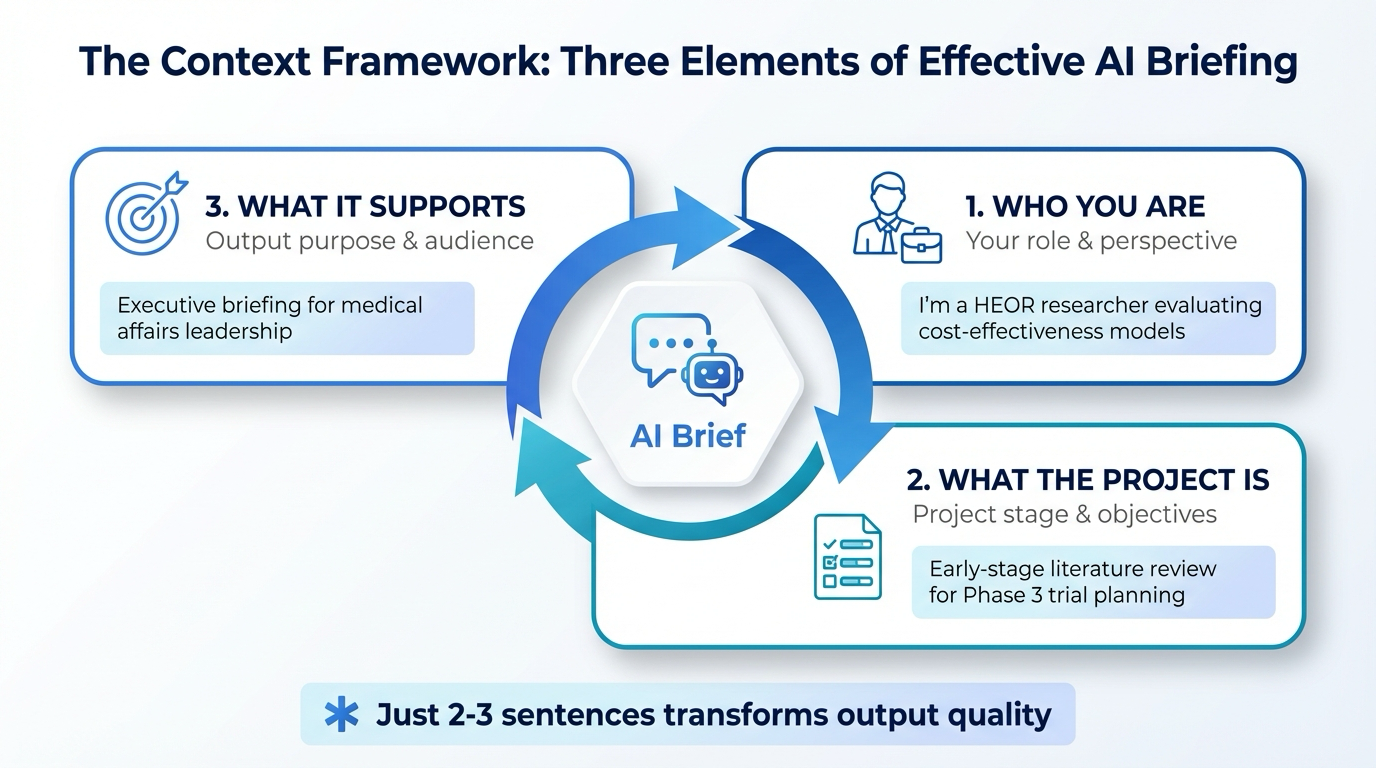

The Three Elements of Effective Context

Effective context setting answers three questions:

- Who am I, and what's my role? — Your professional role shapes what's relevant. A health economist needs different information than a medical writer, even from the same dataset.

- What project am I working on, and what stage am I at? — Early-stage exploration needs different output than final-stage synthesis. The stage determines appropriate depth and format.

- What will this output support or inform? — The decision or deliverable this work supports shapes what to prioritise and what to exclude.

Before & After

Weak Brief (No Context): "Summarise the key findings from these five papers on diabetes interventions."

The AI produces a generic overview. It lists findings from each paper but doesn't help you make a decision because it didn't know what decision you're making.

Strong Brief (With Context): "I'm a health economist preparing a value dossier for payers. We're positioning a new diabetes intervention against the current standard of care. I need to summarise the cost-effectiveness findings from these five papers. Focus on data points that support or challenge a cost-effectiveness threshold of $120k/QALY. The audience is payers who challenged our threshold last year."

Now the AI knows your role, the project, the stage, the decision context, and the audience. The output will prioritise cost-effectiveness data relative to the $120k threshold and flag outliers that might undermine your position.
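If you reuse briefs across projects, the same scene-first habit can be captured in a small template. The Python sketch below is one possible way to do that, not a prescribed method: the function names `build_context` and `build_brief` are hypothetical, and the text passed in is taken from the strong brief above.

```python
def build_context(role: str, project_and_stage: str, purpose: str) -> str:
    """Join the three context elements into a short scene-setting preamble."""
    parts = [role, project_and_stage, purpose]
    # Refuse to build a brief with a missing element rather than let the AI guess.
    if any(not p.strip() for p in parts):
        raise ValueError("All three context elements are required.")
    return " ".join(p.strip() for p in parts)


def build_brief(context: str, request: str) -> str:
    """Put the scene first and the task second."""
    return f"{context}\n\n{request.strip()}"


context = build_context(
    role="I'm a health economist preparing a value dossier for payers.",
    project_and_stage=(
        "We're positioning a new diabetes intervention "
        "against the current standard of care."
    ),
    purpose="The audience is payers who challenged our threshold last year.",
)
brief = build_brief(
    context,
    "Summarise the cost-effectiveness findings from these five papers. "
    "Focus on data points that support or challenge a threshold of $120k/QALY.",
)
print(brief)
```

The only design choice worth noting is the order: the request is always appended after the context, so the scene cannot be skipped.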

Start With the Scene, Not the Task

The next time you open an AI conversation, resist the urge to jump straight to the request. Set the scene first. Answer those three questions in two to three sentences. Then make your request. You'll notice the difference immediately — the output will feel less generic and more tailored.

Context isn't extra work. It's the work that makes everything else work better.

Describe What Good Looks Like: Setting Output Expectations

You've given AI the context it needs. Now comes the next step: describing what you actually want to receive. Most people stop too early. They provide context, then say "summarise this" or "analyse this dataset." The AI has background, but it doesn't know what shape your output should take.

Without explicit output expectations, AI defaults to generic formats. And generic rarely matches what you need.

The Instinct You Already Use

You already do this when you delegate to colleagues. When you brief a team member, you naturally say things like:

- "I need a one-page summary, not a full report."

- "Keep it high-level for the VP. She doesn't need methodology details."

- "Focus on cost-effectiveness results, not clinical data."

- "Make it conversational. This is for an internal deck, not a journal."

These aren't technical instructions. They're output expectations: format, depth, tone, and audience. The same instincts work directly with AI.

Four Dimensions of Output Expectations

Specify four dimensions when briefing AI:

- Format and Length — Do you need a one-page summary, slide deck outline, bullet points, narrative report, or comparison table? Be explicit.

- Depth and Detail — High-level or comprehensive? Executive summary or technical deep dive? Set the depth explicitly.

- Tone and Style — Clinical and technical, or plain English for a business audience? Conversational or formal? Name the voice.

- Audience and Purpose — Who will read this, and what do they care about? Give AI the lens to prioritise information.

| Vague Request | Specific Output Expectation |
|---|---|
| "Summarise this study." | "Create a 1-page summary in bullet format. Audience: VP of Medical Affairs. Tone: executive (not technical). Focus: cost-effectiveness vs. comparator." |
| "Analyse this dataset." | "Produce a 2-page narrative analysis. Highlight trends in patient adherence by age cohort. Include 1–2 data tables. Audience: health plan executives." |
| "Draft a summary of these five papers." | "Draft a 500-word summary comparing methods and findings. Format: short paragraphs, not bullets. Audience: HEOR peers familiar with CEA terminology." |

Same task. Different output expectations. Dramatically different result. Specificity upfront reduces iteration cycles later.
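For teams that keep prompt templates, the four dimensions can be held as named fields so that none is forgotten. This is a minimal Python sketch under assumptions: the `OutputSpec` class and its field names are illustrative, and the values are adapted from the first table row and the delegation examples above.

```python
from dataclasses import dataclass


@dataclass
class OutputSpec:
    """The four dimensions of output expectations (field names are illustrative)."""
    format_and_length: str
    depth: str
    tone: str
    audience: str

    def render(self) -> str:
        # One labelled line per dimension keeps the expectations easy to scan.
        return "\n".join([
            f"Format and length: {self.format_and_length}",
            f"Depth: {self.depth}",
            f"Tone: {self.tone}",
            f"Audience: {self.audience}",
        ])


spec = OutputSpec(
    format_and_length="1-page summary in bullet format",
    depth="high-level; focus on cost-effectiveness vs. comparator",
    tone="executive (not technical)",
    audience="VP of Medical Affairs",
)
request = "Summarise this study.\n" + spec.render()
print(request)
```

Because every field is required, creating an `OutputSpec` with a dimension missing fails immediately, which mirrors the advice to be explicit on all four.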

Share Your Thinking: The Power of Explaining Why

Most people tell AI what to do. Fewer tell AI why they're doing it. That distinction matters more than most people realise.

When you brief a colleague on a task, you naturally share the reasoning: "We need this framed positively because we're presenting to stakeholders who've been sceptical." That context changes how they approach the task, what they emphasise, and what they leave out. They're not just executing a task; they're using judgment to serve your goal.

The Difference Is Enormous

Without the "why," AI operates as a task executor. With the "why," AI operates as a thinking partner, making choices about emphasis, framing, and structure that align with your actual goal — not just the literal task you described.

The Three Questions That Unlock Judgment

When briefing AI, answer these three questions:

- What decision does this output support? — "This summary will inform whether we pursue this intervention for our formulary submission."

- What's the strategic context? — "We've had pushback on cost data before. This needs to pre-empt those objections."

- What constraints exist? — "This must stay within two pages and avoid language that could create regulatory risk."

These aren't complex additions to your brief. They're one or two sentences that unlock a fundamentally different quality of output.

From Task Executor to Thinking Partner

The professionals getting the most value from AI aren't using it differently in terms of tools or features. They're using it differently in terms of communication depth. They treat AI like a capable colleague who benefits from understanding the goal, not just the deliverable.

That's a communication skill. And it's one you already have.

Iterate Like You Would With Any Draft

Here's where most people leave value on the table: they treat the first AI output as a verdict. If it's not quite right, they either accept a suboptimal result or conclude that AI failed. But the first output is a draft — and drafts are supposed to be refined.

How Iteration Changes Output Quality

When you receive a first draft from a colleague, you don't send it straight to the client. You review it, identify what's working and what needs adjustment, and provide specific feedback. Draft two is usually significantly better. By draft three, it's typically very close to final.

AI follows the same pattern. The first output reflects your initial brief. Feedback allows it to adjust emphasis, restructure content, and refine tone based on your specific needs. Each iteration produces a more tailored result.

What Good Feedback Looks Like

Vague feedback produces vague improvement. "Make it better" tells AI nothing useful. Specific feedback produces specific improvement:

- "The cost data section needs to come first; lead with the financial case."

- "The tone is too technical. Rewrite as if explaining to a non-specialist VP."

- "Cut the methodology section; we don't need it for this executive audience."

Now AI knows exactly what to change: sequencing (cost data first), tone (plain language for a non-specialist), and scope (drop methodology).
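In a chat window, iteration simply means replying in the same conversation. If you work with AI through scripts instead, the same idea can be sketched as a growing message history. The Python below is an illustration under assumptions: `iterate` and `fake_model` are hypothetical names, the role/content message shape is a common convention rather than any specific vendor's API, and `fake_model` stands in for a real AI call so the example runs on its own.

```python
from typing import Callable

Message = dict[str, str]  # {"role": "user" or "assistant", "content": "..."}


def iterate(brief: str, feedback_rounds: list[str],
            ask_model: Callable[[list[Message]], str]) -> list[Message]:
    """Run a brief plus successive rounds of feedback as one conversation."""
    messages: list[Message] = [{"role": "user", "content": brief}]
    # Draft one comes from the brief alone.
    messages.append({"role": "assistant", "content": ask_model(messages)})
    for feedback in feedback_rounds:
        # Each round adds feedback to the same history, so earlier context is kept.
        messages.append({"role": "user", "content": feedback})
        messages.append({"role": "assistant", "content": ask_model(messages)})
    return messages


def fake_model(messages: list[Message]) -> str:
    """Stand-in for a real AI call: it only reports which draft this would be."""
    user_turns = sum(1 for m in messages if m["role"] == "user")
    return f"[draft {user_turns}]"


history = iterate(
    "Summarise the cost-effectiveness findings from these five papers.",
    [
        "The cost data section needs to come first; lead with the financial case.",
        "The tone is too technical. Rewrite as if explaining to a non-specialist VP.",
    ],
    ask_model=fake_model,
)
print(history[-1]["content"])
```

The point of keeping one history, rather than starting a fresh request each time, is that every new draft is produced with the original brief and all earlier feedback still in view.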

Why Most People Stop Too Early

Many people approach AI like Google — type a query, get an answer, move on. When the answer isn't perfect, they conclude the tool failed. But AI isn't a search engine returning facts. It's closer to a capable colleague producing work that benefits from feedback.

Research on delegation points the same way: ongoing communication is central to delegating well. If your first AI output is 70% of what you need, that's not failure. That's a strong draft. Provide specific feedback. Get draft two. By draft three, you'll typically have something highly usable.

The professionals getting exceptional results from AI aren't lucky. They're finishing the conversation.

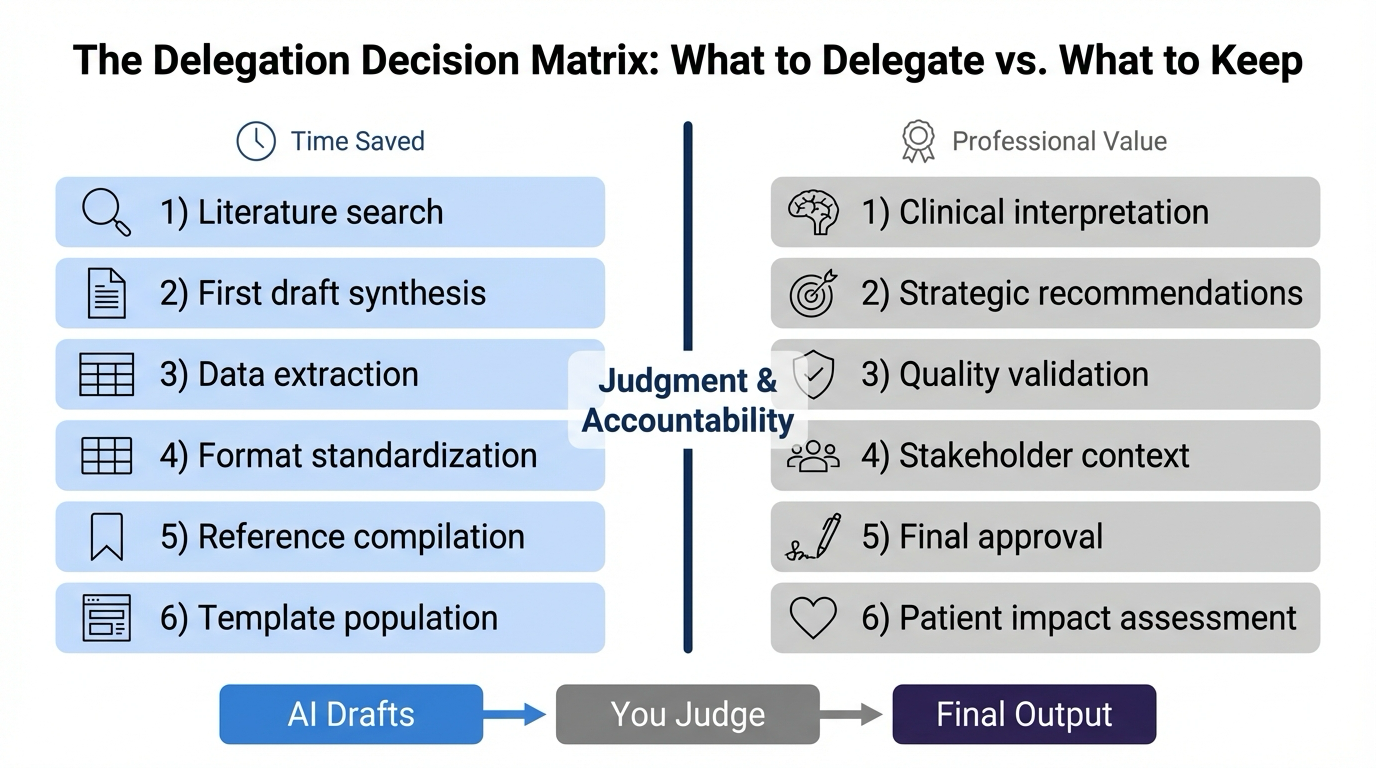

Know When to Take Over: The Boundaries of Good Delegation

You've learned to set context, specify expectations, share your reasoning, and iterate. But here's the final essential skill: knowing when to stop delegating and take over yourself.

The best delegators make clear distinctions about which tasks benefit from delegation and which require their own expertise. AI delegation follows exactly the same principle.

What AI Does Well (and What It Doesn't)

AI excels at structuring content, drafting summaries, synthesising information, and formatting. AI should not handle interpretation (what does this data mean for your formulary decision?), judgment calls (which studies are most relevant to your context?), or professional sign-off (is this conclusion defensible?).

The line isn't arbitrary. It's the same line you draw when delegating to a colleague: you hand off execution, but you retain judgment.

A Practical Example

You're preparing a value dossier. Here's how to divide the work:

Delegate to AI:

- Generate formatting options for presenting cost-effectiveness data.

- Draft the methodology section summarising your economic model structure, data sources, and key assumptions.

- Compile all references in APA format and verify completeness.

Keep for yourself:

- Review the formatting options and choose which best serves your payer audience.

- Read the methodology draft and determine if it accurately represents your model.

- Verify references are accurate and citations support the claims made.

The Real Goal

The point of AI delegation isn't to remove yourself from the work. It's to remove yourself from the parts that don't require your judgment.

When you spend two hours formatting a slide deck, that's two hours you're not spending interpreting data or advising stakeholders. When AI handles the formatting in ten minutes, you have nearly two hours back for the work only you can do.

The goal isn't to automate your thinking. It's to amplify it.

Good delegation has always been about knowing what to hand off and what to keep. You already make these decisions with colleagues every day. Now you're applying the same instinct to AI.

Portions of this document were drafted with the assistance of an LLM. Developed by Aide Solutions LLC.