The Terminology Problem

As artificial intelligence tools become increasingly prevalent in health economics and outcomes research, there's a surge of excitement — and confusion — around terms like "AI agents" and "workflows." At conferences and in publications, these buzzwords are thrown around with little consistency, leading to unrealistic expectations and poor tool selection.

Having spent time diving into the technical literature, including recent guidance from Anthropic on building effective AI systems, I believe it's time to clarify what these terms actually mean and when they might be useful for HEOR work.

The confusion is understandable. "AI agent" has become a catch-all term that different people use to describe vastly different capabilities. Some envision fully autonomous systems, such as Claude Code or Gemini Deep Research, that can handle complex, multi-hour tasks independently. Others use the same term for simple chatbots that answer questions about clinical guidelines. This inconsistency isn't just semantic: it leads to mismatched expectations and inappropriate tool selection.

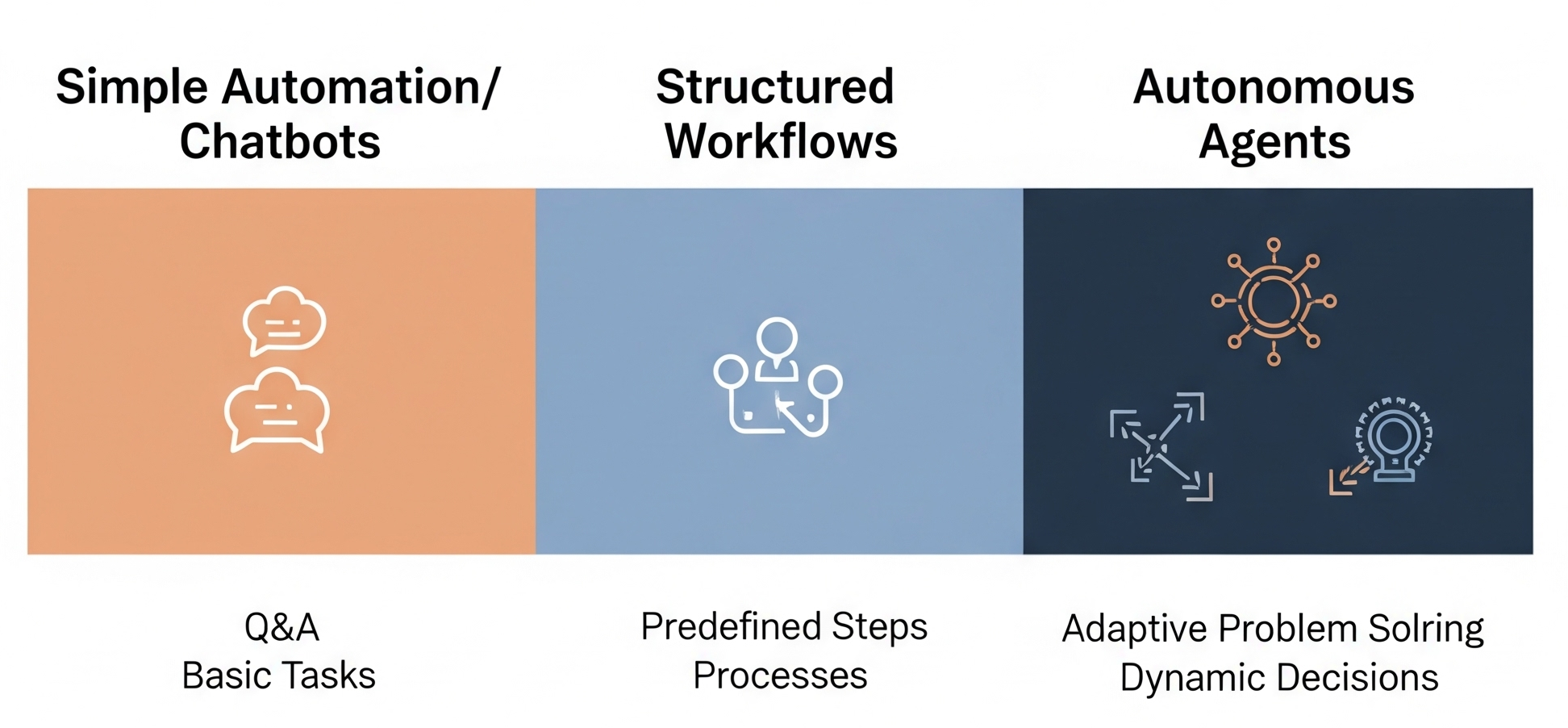

The reality is that there's a spectrum of AI capabilities, and understanding this spectrum is crucial for making informed decisions about when and how to integrate these tools into our research workflows.

A Framework for Understanding: Research Methodologies as Analogy

To cut through the confusion, I find it helpful to think about AI systems the same way we think about research methodologies. Just as we choose between quantitative and qualitative approaches based on our research questions, we should choose AI tools based on the nature of our tasks.

AI Workflows are like systematic reviews. They follow predefined steps: search strategy, screening criteria, data extraction protocols, and analysis frameworks. Each step is predetermined, creating a predictable and reproducible process. The computer follows a structured path that we've defined in advance.
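As a minimal sketch of what "predefined steps" looks like in code, the Python below chains three fixed steps over a toy list of records. The records, field names, and inclusion rule are all hypothetical and chosen only for illustration. In a real workflow some steps might call a language model, but the order of the steps would still be fixed in advance.

```python
# Hypothetical toy records standing in for search results.
RECORDS = [
    {"id": 1, "title": "Cost-effectiveness of drug A", "year": 2021, "design": "RCT"},
    {"id": 2, "title": "Editorial on drug A", "year": 2022, "design": "editorial"},
    {"id": 3, "title": "Budget impact of drug B", "year": 2015, "design": "RCT"},
]

def search(records):
    """Step 1: the search strategy (here, simply return the toy records)."""
    return list(records)

def screen(records, min_year=2018, allowed_designs=("RCT",)):
    """Step 2: apply the predefined inclusion criteria."""
    return [
        r for r in records
        if r["year"] >= min_year and r["design"] in allowed_designs
    ]

def extract(records):
    """Step 3: pull out the predefined fields."""
    return [{"id": r["id"], "title": r["title"]} for r in records]

def run_workflow(records):
    """The steps always run in the same order, so the same input gives the same output."""
    return extract(screen(search(records)))

result = run_workflow(RECORDS)
print(result)
```

Only the first record passes both criteria, and running the pipeline again on the same input returns the same answer, which is the reproducibility the systematic-review analogy points to.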

AI Agents are like qualitative research. Here, the researcher (or in this case, the AI) adapts their approach based on emerging themes and unexpected findings. The system makes dynamic decisions throughout the process, potentially changing direction based on what it discovers. This flexibility comes with less predictability but greater adaptability.
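An agent, by contrast, can be sketched as a loop in which the system picks its next action based on what it has seen so far. In the toy Python below, `choose_next_action` is a hypothetical rule-based stand-in for the decision a language model would make; the action names and the canned findings are likewise invented for illustration.

```python
def choose_next_action(observations):
    """Hypothetical stand-in for a model's decision: choose the next step from what is known so far."""
    if "initial_scan" not in observations:
        return "initial_scan"
    if observations["initial_scan"] == "unexpected pattern" and "follow_up" not in observations:
        return "follow_up"
    return "stop"

# Toy tools: each returns a canned finding.
TOOLS = {
    "initial_scan": lambda: "unexpected pattern",
    "follow_up": lambda: "pattern explained by coding change",
}

def run_agent(max_steps=5):
    """Loop until the decision function says stop or the step budget runs out."""
    observations = {}
    trace = []
    for _ in range(max_steps):
        action = choose_next_action(observations)
        if action == "stop":
            break
        observations[action] = TOOLS[action]()
        trace.append(action)
    return trace, observations

trace, observations = run_agent()
print(trace)
```

The path taken depends on the findings: had the initial scan returned nothing unusual, the loop would have stopped after one step. The step budget (`max_steps`) is one simple guard against runaway behaviour.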

When Each Approach Makes Sense in Health Research

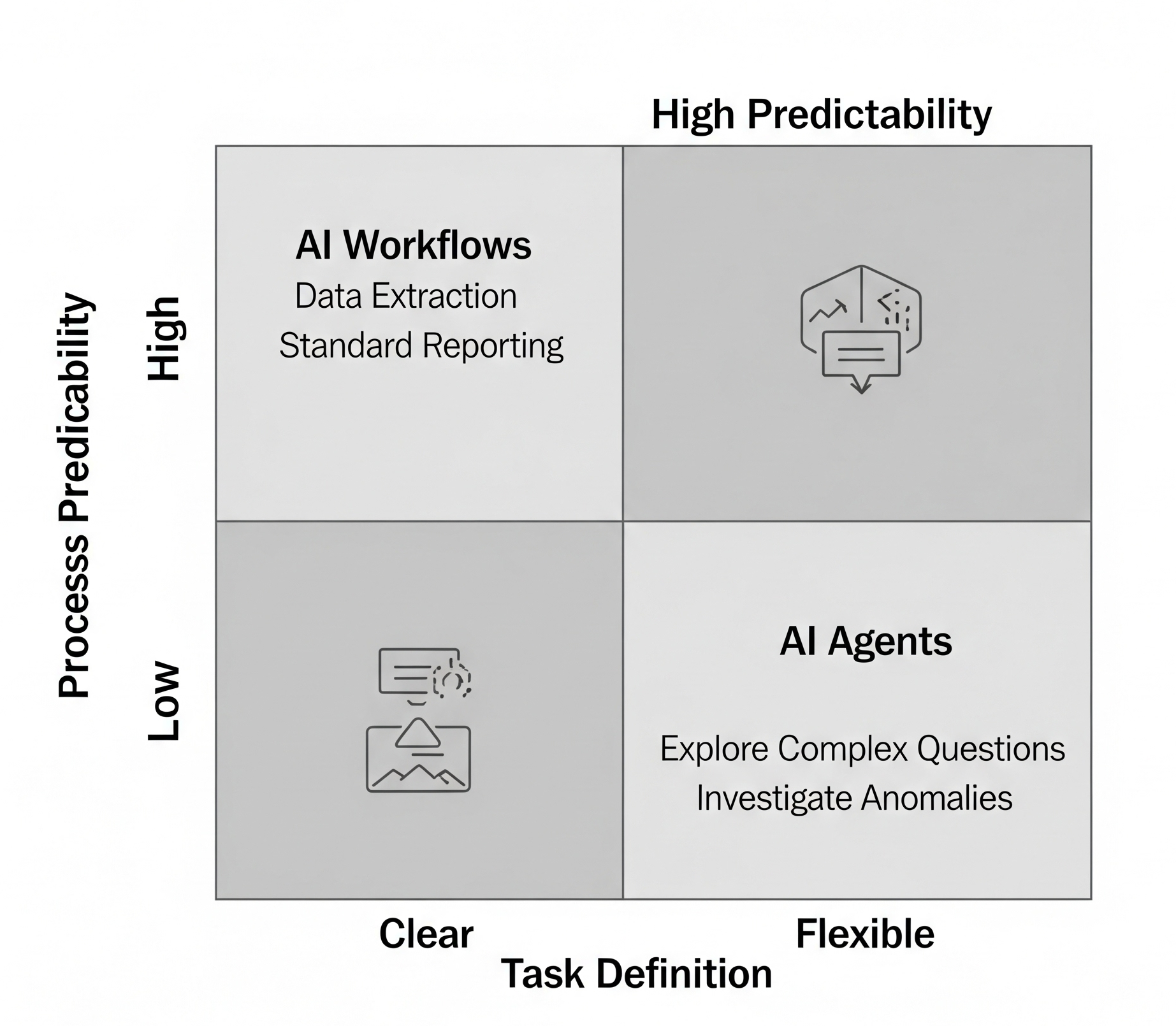

Understanding this distinction helps us choose the right tool for the job. Workflows excel when we have well-defined tasks with clear parameters:

- Literature reviews with established inclusion and exclusion criteria

- Data quality checks following predetermined validation rules

- Report generation with standard sections, such as budget impact models or health technology assessments

- Cost-effectiveness analyses using established frameworks like those recommended by health technology assessment agencies

These tasks benefit from the consistency and reliability that come with predefined processes.
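To illustrate one item from the list, predetermined validation rules can be written down as a simple lookup of checks. This is a sketch only: the field names, the allowed values, and the plausibility ranges below are hypothetical, not a recommended standard.

```python
# Hypothetical validation rules: each maps a field name to a check.
RULES = {
    "age": lambda v: isinstance(v, (int, float)) and 0 <= v <= 120,
    "sex": lambda v: v in {"F", "M"},
    "cost": lambda v: isinstance(v, (int, float)) and v >= 0,
}

def validate(row):
    """Return the names of fields that are missing or fail their rule, sorted alphabetically."""
    return sorted(
        field for field, check in RULES.items()
        if field not in row or not check(row[field])
    )

rows = [
    {"age": 54, "sex": "F", "cost": 1200.0},
    {"age": -3, "sex": "F", "cost": 300.0},
    {"age": 61, "sex": "unknown"},
]
failures = {i: validate(row) for i, row in enumerate(rows)}
print(failures)
```

Because the rules are fixed ahead of time, every row is judged the same way, and a failed check can be traced back to a specific rule.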

Agents, on the other hand, shine when we need flexibility and adaptive problem-solving:

- Exploring complex research questions that require following multiple investigative paths

- Adapting analysis approaches based on emerging data characteristics or unexpected patterns

- Investigating anomalous findings that require flexible follow-up and creative problem-solving

- Synthesizing evidence across diverse sources with varying methodologies and reporting standards

Consider the difference between asking an AI to "extract patient demographics from 100 clinical trial papers and standardize the format" versus "investigate why our cost-effectiveness model results differ significantly from similar studies and suggest methodological adjustments." The first is a perfect workflow task — structured, predictable, and well-defined. The second requires the kind of adaptive investigation that agents can provide.
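To make the first task concrete, here is a minimal sketch of the standardization step, assuming the demographics have already been extracted from each paper into a dictionary. The source field names, the mapping, and the target schema are all hypothetical.

```python
# Hypothetical mapping from field names seen in papers to one standard name.
FIELD_MAP = {
    "mean age": "mean_age",
    "age (mean)": "mean_age",
    "% female": "pct_female",
    "female (%)": "pct_female",
    "n": "n_patients",
    "sample size": "n_patients",
}

def standardize(raw):
    """Rename known fields to the standard schema and convert values to float."""
    out = {}
    for key, value in raw.items():
        standard_name = FIELD_MAP.get(key.strip().lower())
        if standard_name is not None:  # unknown fields are dropped
            out[standard_name] = float(value)
    return out

extracted = [
    {"Mean age": "62.4", "% Female": "48", "N": "310"},
    {"Age (mean)": 58.1, "Female (%)": 51.5, "Sample size": 1200},
]
standardized = [standardize(paper) for paper in extracted]
print(standardized)
```

Every paper passes through the same mapping, so the step can be specified, checked, and rerun without any adaptive decision-making. The second task in the example has no comparable fixed recipe, which is why it leans toward an agent.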

The Reality Check: Start Simple, Add Complexity Thoughtfully

Here's what the technical literature makes clear, but marketing materials often obscure: most research tasks don't require sophisticated "agents." Enhanced search capabilities, structured prompts, and simple automation often deliver most of the benefit at a fraction of the complexity and cost (as a rule of thumb, roughly 80% of the benefit for 20% of the effort).

This matters because complexity has real costs. More sophisticated AI systems are typically more expensive to run, harder to debug when they make errors, and more prone to unexpected behaviours. They also require more technical expertise to implement and maintain effectively.

The key is to start with the simplest solution that meets your needs. If you need to extract specific data points from a large number of papers, begin with a structured workflow approach. Only consider agent-based solutions when the task genuinely requires adaptive decision-making that can't be predetermined.

Implications for Health Researchers

As our field continues to integrate AI tools, we need to resist the temptation to chase the latest technological buzzwords. Instead, we should approach AI the same way we approach any research tool: by carefully matching the tool's capabilities to our specific needs.

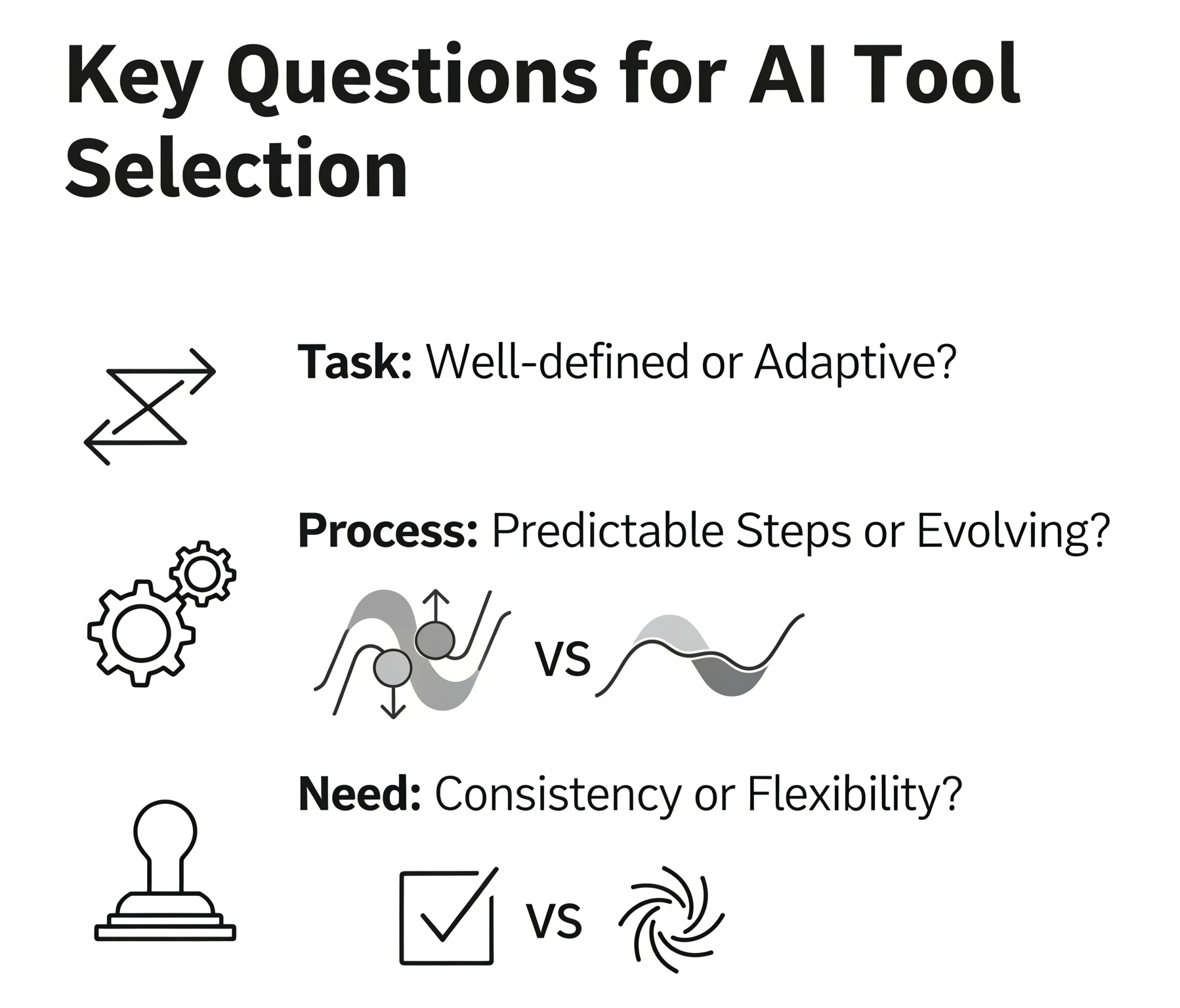

This means asking ourselves fundamental questions: Is this task well-defined with clear parameters, or does it require adaptive problem-solving? Can I predict the steps needed in advance, or will the approach need to evolve based on what we discover? Do I need the consistency of a protocol-driven approach, or the flexibility of exploratory investigation?
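These three questions can be written as a small rule of thumb. The function below is a hypothetical illustration of the reasoning, not a validated decision tool: the parameter names and the returned labels are assumptions made for this sketch.

```python
def recommend_approach(well_defined, steps_predictable, needs_consistency):
    """Hypothetical rule of thumb based on the three questions above."""
    answers = (well_defined, steps_predictable, needs_consistency)
    if all(answers):
        return "workflow"
    if not any(answers):
        return "agent"
    # Mixed answers: default to the simpler option before adding complexity.
    return "start with the simplest option and reassess"

print(recommend_approach(True, True, True))
print(recommend_approach(False, False, False))
print(recommend_approach(True, False, True))
```

The mixed case deliberately falls back to the simpler option, in line with the advice to add complexity only when the task genuinely demands it.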

The growing interest in AI within health economics and outcomes research is exciting and warranted. These tools have genuine potential to enhance our research efficiency and capabilities. But realising this potential requires moving beyond buzzwords to understand what these systems actually do — and more importantly, when simpler approaches might serve us just as well.

By thinking clearly about our research needs first and technological capabilities second, we can make informed decisions that truly advance our work rather than simply adopting the latest trend. After all, the goal isn't to use the most sophisticated AI available — it's to answer important health research questions more effectively.

This piece was written with the support of GenAI. The author reviewed, edited, and takes full responsibility for the content and conclusions presented.